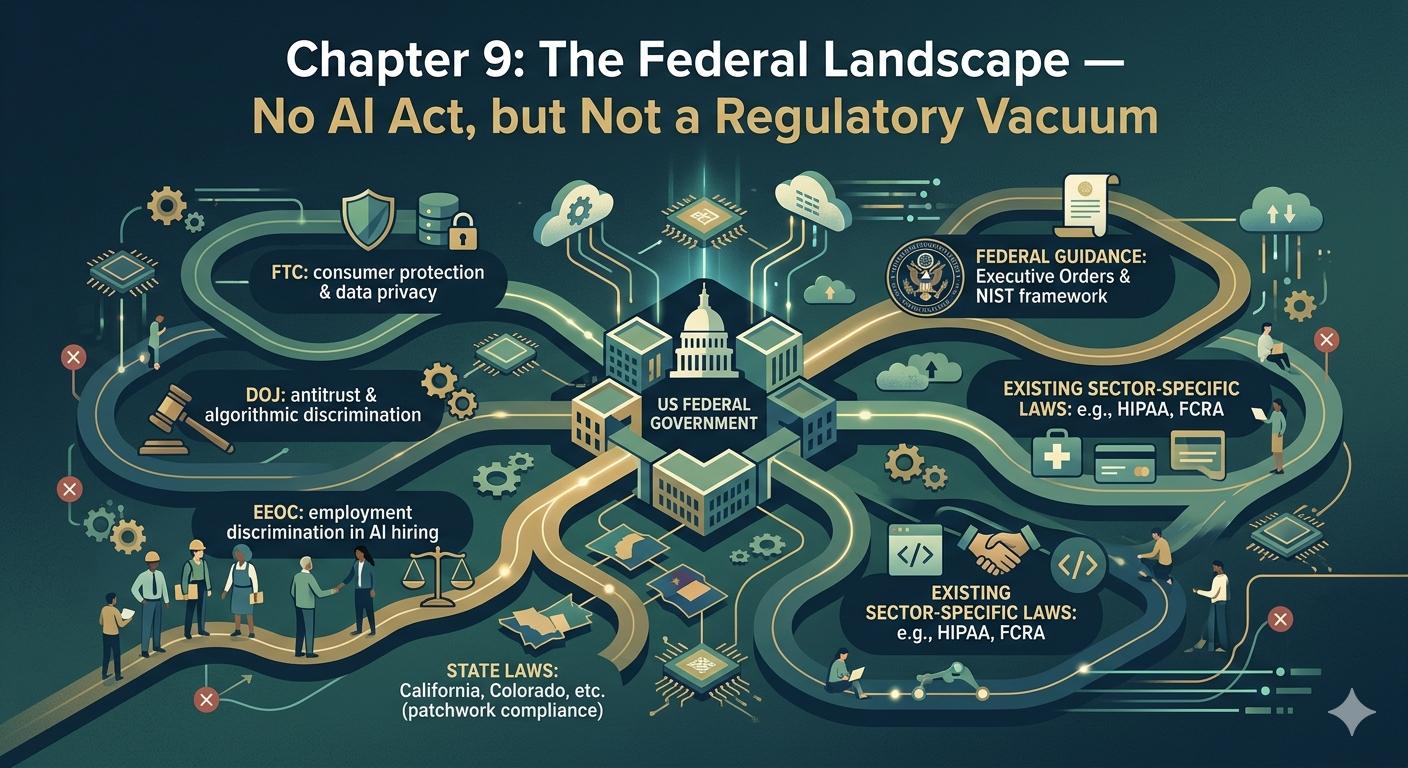

Lawyers who work in constitutional litigation learn early that the hardest cases are not the ones where the law is absent. They are the ones where the law exists but was designed for a different problem. That is precisely the situation in American AI governance at the federal level. The tools are real — consumer protection statutes, civil rights laws, administrative law, enforcement agencies with genuine authority. What is missing is not law. What is missing is architecture.

The United States does not have a comprehensive federal statute governing artificial intelligence comparable to Regulation (EU) 2024/1689. The constitutional framework we examined in the previous chapter provides the floor — the minimum protections that no government action can violate. But constitutional litigation is reactive, expensive, and uncertain. It responds to harm after it occurs. It does not prevent deployment of systems that should never have been deployed. Understanding what federal law does offer — and where its limits lie — is essential for any lawyer advising clients whose work touches the AI systems now embedded in criminal justice, immigration enforcement, employment, and consumer credit.

Executive policy — the role of presidential directives

Executive Order 14110, 88 Fed. Reg. 75191 (Nov. 1, 2023) (rescinded January 20, 2025)

Executive Order 14179, 90 Fed. Reg. 8741 (Jan. 31, 2025) (currently in force)

Because Congress has not enacted comprehensive AI legislation, the executive branch has been the primary driver of federal AI policy — which means that federal AI governance at the highest level has swung significantly with the change of administration in January 2025.

The Biden administration’s Executive Order 14110, signed October 30, 2023, represented the most ambitious federal AI governance effort to that point. It directed over fifty federal agencies to take more than a hundred specific actions: assessing AI-related risks in federal programs, developing guidance on responsible AI procurement, addressing potential discrimination and civil rights impacts, and requiring developers of certain powerful AI systems to share safety testing results with the federal government under the Defense Production Act. It built on the NIST AI Risk Management Framework and the Blueprint for an AI Bill of Rights, creating a government-wide architecture for responsible AI deployment.

That architecture did not survive the change of administration. On January 20, 2025 — his first day in office — President Trump rescinded E.O. 14110 as part of a broader set of executive actions. Three days later, on January 23, 2025, he signed Executive Order 14179, “Removing Barriers to American Leadership in Artificial Intelligence,” which replaced Biden’s framework with one focused on reducing regulatory friction and promoting US AI competitiveness. E.O. 14179 directed agencies to review and revise or rescind actions taken under E.O. 14110 that conflicted with the new policy, and mandated the creation of an AI Action Plan within 180 days.

For lawyers advising clients, two lessons follow. First, executive AI governance in the US is inherently contingent — it exists at the pleasure of the current administration and can be reversed overnight. Second, and more importantly for practice, the executive orders of either administration do not create enforceable individual rights. They direct agencies. They set priorities. They do not create the kind of statutory obligations that an individual or an organization can invoke in court. For that, we need to look elsewhere.

The Federal Trade Commission — consumer protection and algorithmic harm

Federal Trade Commission Act, Section 5, 15 U.S.C. § 45(a)

The most consistently active federal actor in AI accountability has been the Federal Trade Commission, whose authority derives from Section 5 of the Federal Trade Commission Act, 15 U.S.C. § 45(a), which prohibits unfair or deceptive acts or practices in commerce. That authority predates artificial intelligence by decades. Its application to algorithmic systems has developed through enforcement actions, guidance documents, and regulatory proceedings that have consistently affirmed a simple principle: the FTC’s existing consumer protection authority applies to AI, and companies cannot evade legal responsibility simply by labeling a practice “automated.”

The Commission has made clear that misleading claims about AI capabilities — overstating accuracy, concealing limitations, misrepresenting how a system reaches its outputs — constitute deceptive practices under Section 5(a). Deploying algorithmic systems that cause substantial consumer harm without adequate safeguards may constitute unfair practices under the same provision. In the AI context, the FTC has focused particularly on systems used in credit, employment, housing, and healthcare — the same domains where the EU AI Act’s Annex III high-risk classification operates.

For lawyers challenging algorithmic tools supplied by private vendors, such as facial recognition systems, hiring filters, and credit scoring tools, FTC enforcement creates an avenue of accountability that operates independently of constitutional litigation. The standard is lower than constitutional proof of discriminatory intent. The FTC’s unfairness test, codified at 15 U.S.C. § 45(n), asks whether a practice causes or is likely to cause substantial injury to consumers, whether that injury is reasonably avoidable by consumers themselves, and whether it is outweighed by countervailing benefits to consumers or to competition. Applied to an algorithmic system producing discriminatory outcomes at scale, that test can be satisfied without proving that anyone intended to discriminate.

Civil rights enforcement — DOJ and EEOC

Title VII of the Civil Rights Act of 1964, 42 U.S.C. § 2000e et seq.

Title VI of the Civil Rights Act of 1964, 42 U.S.C. § 2000d et seq.

Americans with Disabilities Act, 42 U.S.C. § 12101 et seq.

The Department of Justice and the Equal Employment Opportunity Commission enforce federal civil rights statutes whose application to algorithmic systems has been progressively clarified through agency guidance and litigation. These statutes were not written with machine learning in mind. They apply nonetheless.

Title VII prohibits employment discrimination on the basis of race, color, religion, sex, or national origin. The EEOC has issued guidance confirming that algorithmic hiring tools may violate Title VII if they produce disparate impacts on protected groups — even when the discriminatory outcome results from the system’s training data rather than any explicit discriminatory design. This is the statutory route around the intent requirement that makes Fourteenth Amendment equal protection claims so difficult, as we established in the previous chapter. Under Title VII’s disparate impact theory, derived from Griggs v. Duke Power Co., 401 U.S. 424 (1971), a facially neutral employment practice that disproportionately excludes a protected group is unlawful unless the employer can demonstrate that it is job-related and consistent with business necessity.
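To make "disproportionately excludes" concrete, the arithmetic behind a first-pass disparate impact screen can be sketched in a few lines. The sketch below assumes the four-fifths guideline from the EEOC's Uniform Guidelines on Employee Selection Procedures, 29 C.F.R. § 1607.4(D), under which a group selection rate below 80 percent of the highest group's rate is generally treated as evidence of adverse impact. That guideline is a rule of thumb and not a legal conclusion, and courts also look to statistical significance and context. The class name, function name, group labels, and all figures are hypothetical and invented purely for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GroupOutcome:
    """Applicants and selections for one group (hypothetical data)."""
    name: str
    applicants: int
    selected: int

    @property
    def selection_rate(self) -> float:
        if self.applicants <= 0:
            raise ValueError(f"{self.name}: applicants must be positive")
        return self.selected / self.applicants


def impact_ratios(groups: list[GroupOutcome]) -> dict[str, float]:
    """Divide each group's selection rate by the highest group rate."""
    highest = max(g.selection_rate for g in groups)
    if highest == 0:
        raise ValueError("no group has any selections")
    return {g.name: g.selection_rate / highest for g in groups}


# Hypothetical output of an algorithmic hiring filter.
outcomes = [
    GroupOutcome("group_a", applicants=200, selected=100),  # rate 0.50
    GroupOutcome("group_b", applicants=150, selected=45),   # rate 0.30
]

ratios = impact_ratios(outcomes)

# Assumed threshold: the four-fifths (0.8) rule of thumb.
FOUR_FIFTHS = 0.8
flagged = [name for name, ratio in ratios.items() if ratio < FOUR_FIFTHS]

print(ratios)
print(flagged)
```

In this invented example, group_b is selected at 30 percent against 50 percent for group_a, a ratio of 0.6, so the screen flags it. A flag of this kind only opens the inquiry: the employer may still show that the tool is job-related and consistent with business necessity.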

Title VI prohibits discrimination on the basis of race, color, or national origin in programs receiving federal funding. Because many of the algorithmic systems used in criminal justice, immigration enforcement, and public benefits administration operate within federally funded programs, Title VI creates a direct avenue for challenging discriminatory algorithmic practices in those contexts. The DOJ has authority to initiate enforcement proceedings. Individuals have a private right of action only for intentional discrimination: under Alexander v. Sandoval, 532 U.S. 275 (2001), private plaintiffs cannot sue to enforce the disparate impact regulations issued under Title VI, which leaves disparate impact enforcement to the funding agencies.

The ADA extends similar protection to disability status in employment and public services — relevant as algorithmic systems increasingly make or influence decisions about individuals whose protected characteristics may be inferred from data rather than disclosed directly.

Immigration and homeland security technologies

In immigration enforcement, AI systems operate within a legal environment that combines immigration statutes, administrative law, constitutional due process protections for non-citizens, and the specific authorities of agencies within the Department of Homeland Security. The systems we will examine in depth in Module 5 — ImmigrationOS, the Hurricane Score, the Risk Classification Assessment — operate within this complex framework.

What is particularly important from a federal law perspective is the interaction between administrative law and algorithmic decision-making. The Administrative Procedure Act, 5 U.S.C. § 551 et seq., governs federal agency rulemaking and adjudication, and its judicial review provisions, 5 U.S.C. §§ 701-706, allow individuals to challenge agency action that is arbitrary, capricious, an abuse of discretion, or otherwise not in accordance with law, 5 U.S.C. § 706(2)(A). When an agency deploys an algorithmic tool to make or influence decisions affecting individual liberty, such as detention, removal, or visa denial, and that tool operates without adequate transparency, the APA provides a potential basis for challenge that does not require proving constitutional violations.

The argument is essentially this: a decision is arbitrary and capricious when the decision-maker cannot explain the basis for it. If an immigration officer approves an algorithmic recommendation without understanding how the system reached its output or being able to articulate the reasoning to the affected individual, the decision may not survive APA review. We will examine how this argument has been deployed in specific immigration AI litigation in the chapters that follow.

Section 1983 — suing the government for algorithmic rights violations

42 U.S.C. § 1983

For challenges to algorithmic decisions by state and local government actors — which includes most criminal justice AI deployments, since police, prosecutors, and courts operate at the state and local level — 42 U.S.C. § 1983 is the primary litigation vehicle. Section 1983 allows individuals to bring civil lawsuits against state or local officials who violate constitutional rights under color of law.

The practical significance of Section 1983 in AI litigation is substantial. It creates a federal cause of action for constitutional violations by state actors that can be brought in federal court, and the fee-shifting provision in 42 U.S.C. § 1988 makes litigation viable for plaintiffs who could not otherwise afford to challenge government algorithmic decisions. Fourth Amendment surveillance claims, Fourteenth Amendment due process challenges to opaque algorithmic sentencing or detention tools, and Fourteenth Amendment equal protection claims against discriminatory risk assessment systems can all be brought under Section 1983 against the officials who deployed and relied on those systems. The due process claims run through the Fourteenth Amendment rather than the Fifth because Section 1983 reaches state and local actors, and the Fifth Amendment's Due Process Clause constrains only the federal government.

The limits of Section 1983 are equally important to understand. It applies to state actors, not private companies. It requires proof of constitutional violations — which means the intent problems we identified with equal protection claims apply here as well. And qualified immunity doctrine, which shields government officials from liability unless they violated clearly established law, has historically made Section 1983 AI claims difficult to sustain in novel contexts where the constitutional rules are not yet settled.

The structural gap compared to the EU

The contrast with the European framework is most visible at the level of prevention. The EU AI Act requires conformity assessments, risk management systems, technical documentation, and human oversight mechanisms before high-risk systems enter deployment. The US federal framework responds to harm after it occurs — through FTC enforcement actions, civil rights investigations, constitutional litigation, and administrative law challenges.

This is not simply a difference in regulatory philosophy. It has concrete consequences for lawyers and for clients. In the EU, a lawyer can advise a deployer on the compliance obligations they must meet before launching a high-risk system, and point to specific articles that define those obligations. In the US, the same lawyer advises the same deployer on the litigation risks they accept by deploying a system whose outputs they cannot fully explain or whose discriminatory effects they cannot fully quantify, because the legal accountability will come, if it comes at all, after the harm has already occurred and been documented.

The gap is not permanent. State legislatures — particularly in New York, which we examine in the next chapter — have begun filling it with specific AI obligations that the federal framework has not yet provided. And the interaction between state law, federal enforcement authority, and constitutional litigation creates a more complex regulatory environment than either the fragmentation criticism or the “regulatory vacuum” description suggests.

A fragmented but real framework

The United States federal AI governance landscape in 2026 is fragmented, politically contingent, and predominantly reactive. It is also, for the lawyer who understands it, genuinely usable. The FTC’s consumer protection authority, the DOJ and EEOC’s civil rights enforcement powers, the APA’s constraints on arbitrary agency action, and Section 1983’s cause of action for constitutional violations together cover a significant portion of the territory that the EU AI Act addresses through its compliance architecture.

What they do not provide is a unified framework, a risk classification system, mandatory pre-deployment obligations, or a clear hierarchy of authority. What they require instead is a lawyer who can identify which tool applies to which harm, build arguments across multiple legal frameworks simultaneously, and understand the difference between what the law prohibits and what a plaintiff can actually prove in litigation.

That is the skill set this book is designed to build — starting with the most developed state-level framework in the country.
